Why Traditional Governance Falls Short

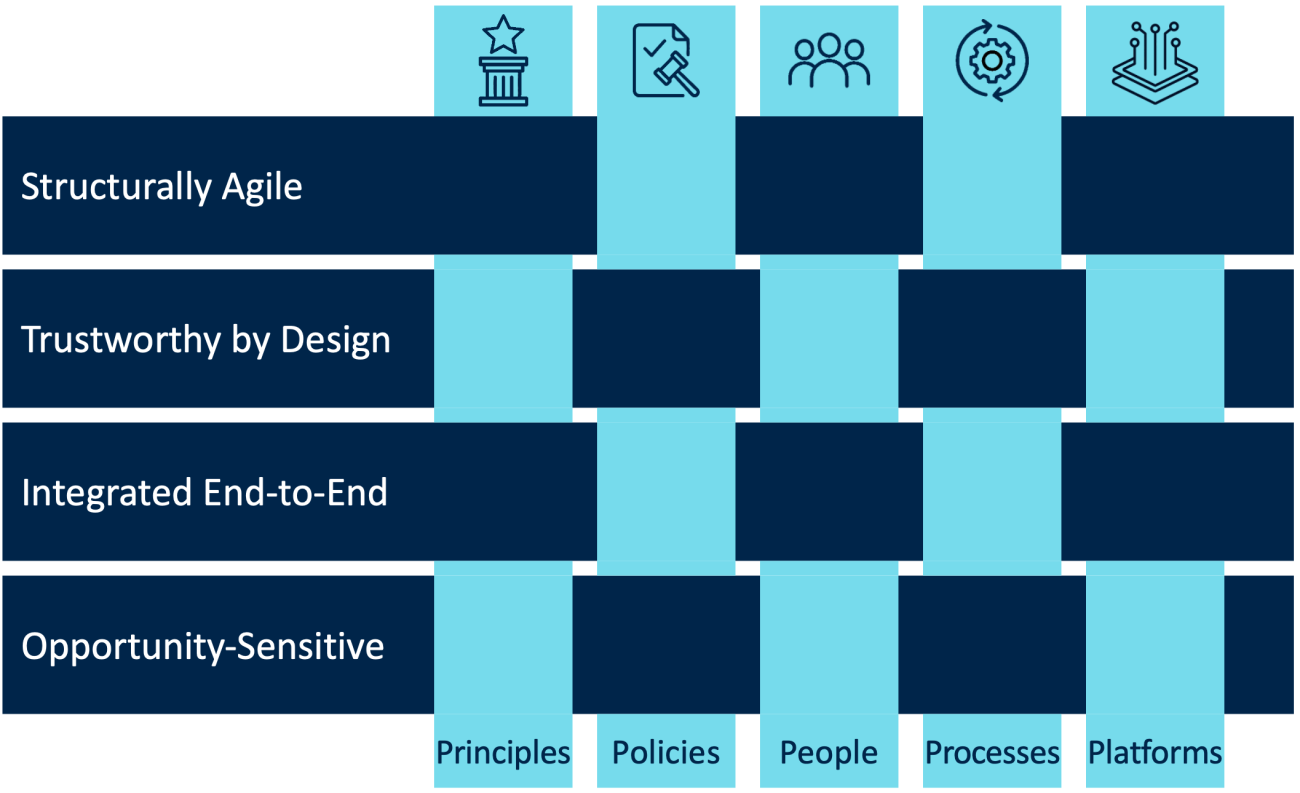

Organizations govern technology through mechanisms that can be organized into five domains: principles, policies, people, processes, and platforms. Principles articulate guiding values; policies translate those values into enforceable rules. People are organized in structures with clear decision rights. Processes define repeatable workflows. And platforms provide the infrastructure to enforce governance and monitor the use of technology at scale. When leaders perceive a governance gap, the instinct is to fill every domain as quickly and comprehensively as possible—which carries its own risks.

Consider the experience of FinCo, a global diversified financial services firm.[foot]FinCo is a pseudonym. By protecting the organization’s identity, we can share lessons from the firm’s experience candidly. The authors conducted interviews with seventeen leaders at this organization in 2025.[/foot] When GenAI emerged in late 2022, employees across FinCo’s federated business units quickly began experimenting with it independently—drafting client communications, summarizing reports, generating marketing copy—using unsanctioned GenAI tools. Risk-sensitive executives became alarmed. In an industry where data breaches and regulatory violations carry existential consequences, the executives moved swiftly to establish formal governance.

FinCo’s response was broad: the firm took action in all five governance domains. Corporate data, IT, and ethics units collaborated on principles for responsible AI use, articulating guiding values such as no automated decision-making and privacy by design. FinCo’s board then requested a comprehensive enterprise AI policy to translate those values into enforceable rules; drafting it took close to a year and involved hundreds of stakeholders. For use case oversight, FinCo established AI Review Committees (ARCs): people organized in formal cross-functional structures with clear decision rights. The ARCs reviewed proposals through a repeatable process in which risk ratings determined routing. Regional ARCs handled low- and medium-risk cases monthly; a corporate ARC reviewed high-risk proposals quarterly. Finally, FinCo built controls into its platforms, such as FinGPT, a secure internal wrapper for approved large language models designed for safe experimentation.

By any conventional measure, FinCo’s governance was thorough. Yet a year later, innovation had ground to a halt. The comprehensive policy was already outdated by the time it was complete. Low-risk proposals stalled for months in ARC review cycles (for example, a low-risk agent prototype involving no sensitive data took six months to be approved). Employees needed sign-off from both the legal team and the relevant ARC just to access FinGPT, a platform that was already designed for safe experimentation. Business sponsors of GenAI initiatives faced committees where risk-oriented voices consistently outnumbered those advocating for new opportunities. Frustrated teams reverted to unsanctioned GenAI tools. GenAI vendors pitched solutions directly to business leaders who couldn’t get traction through official channels. Shadow GenAI (the unauthorized use of GenAI tools and solutions) spread more widely than before.[foot]For more on shadow GenAI, see N. van der Meulen and B. H. Wixom, “Managing the Two Faces of Generative AI,” MIT CISR Research Briefing, Vol. XXIV, No. 9, September 2024, https://cisr.mit.edu/publication/2024_0901_GenAI_VanderMeulenWixom.[/foot] The board, recognizing that FinCo had swung from unchecked adoption to paralysis, only to see unsanctioned use return, issued an urgent mandate to redesign the organization’s governance approach.

FinCo had mechanisms in every domain. The question is whether those mechanisms were suited to GenAI’s unique demands. Traditional governance assumes stable technologies, predictable risks, and manageable demand for a new technology. GenAI upends these assumptions. Its natural language interface, ubiquitous availability, and broad applicability fuel adoption that outpaces any organization’s capacity for centralized review. Simultaneously, probabilistic models, performance drift, and compounding risk across interdependent components create a risk space that shifts faster than leaders can anticipate. As one FinCo executive observed, “Governance designed for technologies with 50-year life cycles doesn’t work when the technology itself transforms every 18 months.”